Welcome

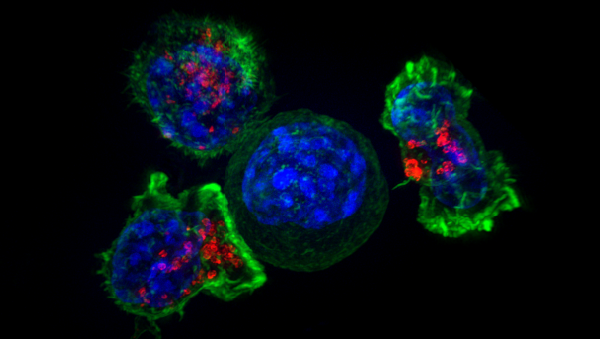

Welcome to the Department of Computer Science at Princeton University. Princeton has been at the forefront of computing since the days when Alan Turing, Alonzo Church, and John von Neumann were among its residents. Our department is home to about 60 faculty members, with strong groups in theory, networks/systems, vision/graphics, architecture/compilers, programming languages, security/policy, machine learning, natural language processing, human-computer interaction, robotics, and computational biology.